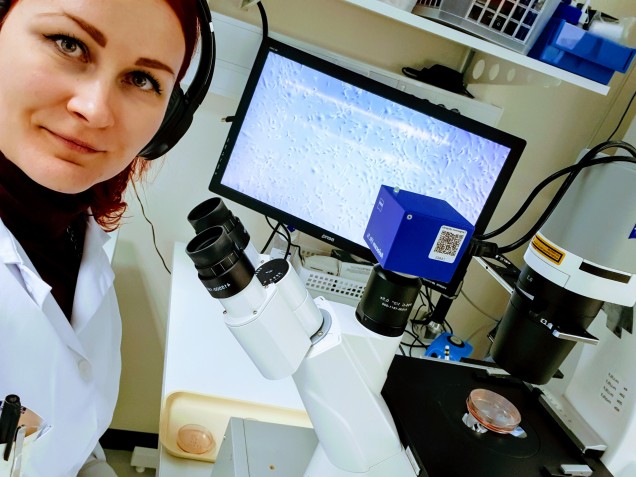

By: Elisa Vuorinen

I am what you might call a hard-core experimental bioscientist.

I thrive in a white coat pipetting minimal amounts of colorless liquids or peeking through a microscope. You want an elaborate series of laboratory experiments planned and executed? I’m your gal. 🙂

However, the world of biological research has been shifting for a while now and more and more research is conducted on computers and servers, in clouds. Where there are laboratory experiments, there’s data to be analyzed, and this data is getting quite massive and can no longer be managed in Excel sheets – the time we are living in is not called “the era of Big Data” for nothing. Furthermore, why perform the laboratory experiments and generate the data yourself if someone else has already done more than you would ever have resources for? You can just obtain their data and analyze it from your point of view! Bioinformaticians and data scientists analyzing the data are the key players here – and from a lab rat’s perspective, much of what they do seems like actual rocket science.

A few years back I was a PhD student finishing up my doctoral thesis and thinking of what to do next, when a position for a postdoctoral researcher in Matti Nykter’s Computational biology research group opened up. The position was for an experimental scientist but within a group whose core expertise lies in the analysis of biological data. What an amazing setup to learn new things, I thought. I applied and was selected.

In our group, experimental scientists work side by side with bioinformaticians. Roughly, the division of labor goes along the lines that experimentalists perform lab work and generate the biological data (for example genetic sequencing data from a specific set of samples), then provide the data to bioinformaticians who do the analysis. The results are then interpreted together to obtain biologically relevant insights.

Seems straightforward, eh? 🙂

That’s how we got to where we are now. It had been discussed in our group for a while that the experimental scientists should learn to do some basic high-throughput analyses themselves so as not to be as dependent on the bioinformaticians, or at least get a glimpse of the world of data analysis to facilitate more effective collaboration. Words turned into actions, and just before Christmas 2019 we ended up locked up in a windowless basement with our laptops for a day, learning the basics of the UNIX operating system for starters. Thank god for peer support. Meanwhile, the group’s real bioinformaticians fine-tuned existing data analysis pipelines. The event was called coding camp to make it sound fun. (Well ok, honestly speaking, the venue was quite a nice sauna space in Tampere city center.)

Since then, the experimental scientists in our group have gathered once every two weeks or so to continue the coding camp effort. We agree on a set of exercises to finish, and after completing them we discuss the problematic points. So far, we have covered the basics of the UNIX operating system and the quality control and analysis of RNA and whole-genome sequencing data, performed on servers.

I think that since the first coding camp, the unprintable words uttered during the coding exercises have decreased and everyone has gotten at least a slight hang of it. Some of us might even – pause for dramatic effect – enjoy it. It remains to be seen if we can actually apply our new skills to real data, as so far we have mostly been working with simulated, optimal data. But in general, researchers who feel at home outside their comfort zone tend to be resilient and apt at absorbing new information, capable of learning whatever we set our sights on.